There is a precise moment when a game stops being just a game. We found it in Sanremo, in the middle of an experiment with Gemini, Google’s artificial intelligence model, while we were trying to understand who would win the seventy-sixth edition of the Festival. It seemed like a harmless, almost frivolous exercise. And in part it was. But what emerged from the analysis says something much more serious: it shows how a machine reasons, where it goes wrong, and why you should distrust anyone who proposes applying the same recipe to your company data. AIs are not oracles. They are sophisticated mirrors that reflect patterns from the past. In Sanremo, the price of error is a wrong prediction. On your data, it could cost much more.

How a machine reasons: the Gemini method

It all starts with a series of precise instructions. We asked Gemini to analyze Auditel data, streaming flows on Spotify and Apple Music, YouTube views of official video clips, Google search trends, partial votes from institutional juries, winners from the last ten years, bookmakers’ odds and, a detail that reveals how sophisticated the model is in its approach, the authors and producers of the winning songs in the last decade. The idea was to build not just a photograph of the present, but a map of the DNA of Sanremo success.

Gemini’s response was not a simple “in my opinion X wins”. It was an analysis report structured into ten sections, with comparative tables, a weight assigned to each jury and even a longitudinal study of the evolution of the Festival from 2016 to 2025. The tone was that of a high-level financial analyst, not a pop music enthusiast.

The model correctly identified some of the forces governing the competition. It reconstructed, for example, how Mahmood’s victory in 2019 with “Soldi”, thanks to the production of Dardust and Charlie Charles, confirmed the dominance of streaming over physical sales and inaugurated the era in which “indie” and urban pop stopped being a niche. It traced the trajectory of Måneskin in 2021, the triumph of Mahmood and Blanco in 2022 with “Brividi” (streaming record in the first 24 hours), up to Olly’s victory in 2025 with “Balorda Nostalgia”, built on the delicate balance between acoustic guitars, piano and layered electronic beats.

From historical analysis, Gemini extracted an almost mathematical law: the winning song is never the product of a solitary intuition, but the outcome of a production architecture designed with surgical precision. And it applied this law to the 2026 cast, discovering an oligopolistic system in which a few authors (Federica Abbate, Alessandro La Cava, Davide Petrella, Dardust) sign multiple songs in the competition simultaneously, like a record label betting on multiple horses in the same race.

The voting mechanism in the final was broken down into three vectors: the Televote (34%), the Press Room Jury (33%) and the Radio Jury (33%). A system designed, at least on paper, to punish extreme candidates, strong in only one category, and reward multi-factorial consistency. Gemini understood this well.
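To see why such a split rewards consistency, here is a minimal sketch. It assumes the final score is a plain weighted sum of an artist's percentage share in each of the three votes, which the official rules may refine. The function name `final_score` and both sets of shares are hypothetical, invented purely for illustration.

```python
# Weights for the final as described above.
WEIGHTS = {"televote": 0.34, "press": 0.33, "radio": 0.33}


def final_score(shares: dict[str, float]) -> float:
    """Weighted sum of an artist's percentage shares (assumed formula)."""
    return sum(WEIGHTS[name] * shares[name] for name in WEIGHTS)


# Hypothetical shares, not real Festival data.
polarizing = {"televote": 40.0, "press": 10.0, "radio": 10.0}
balanced = {"televote": 22.0, "press": 22.0, "radio": 22.0}

print(round(final_score(polarizing), 1))  # 20.2
print(round(final_score(balanced), 1))    # 22.0
```

Under these assumptions, an artist who dominates the televote but is ignored by both juries still finishes behind one who is merely solid everywhere.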

We know that every year Sanremo’s winners come with their own collection of theories, backstories and corridor whispers. Ours is a data analysis. The rest we happily leave to gossip.

The prediction: three candidates, an impeccable logic

After sifting through Auditel data, Spotify charts, YouTube trends, plays monitored by EarOne (the leading Italian platform for real-time monitoring of radio and television plays) and bookmaker odds, Gemini produced an articulated, coherent, internally impeccable prediction.

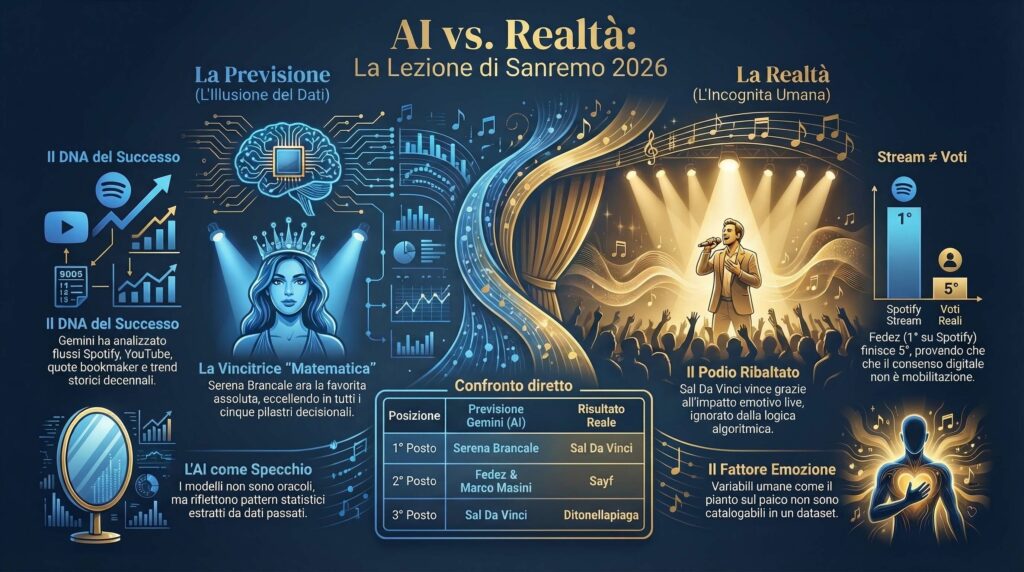

Predicted Winner: Serena Brancale. The singer-songwriter from Puglia was, according to the model, the only artist capable of recording excellence on all five decision-making pillars: in the qualitative Top 5 of the Press Room since the first evening, solid in the aggregated Top 5 of Televote and Radio, second in visual impact on YouTube, leading the charts of new radio entries tracked by EarOne, unanimously supported by bookmakers with odds between 2.85 and 3.50. Her song, an elegy dedicated to her mother, had won a standing ovation that, Gemini wrote, “escapes purely numerical measurements but certifies a tangible emotional triumph.” The model had crowned her with the same certainty with which a computer from the 1970s declared it had solved the problem of chess.

Predicted runner-up: Fedez & Marco Masini. The unprecedented partnership was described as “the tactical and marketing masterpiece of the 2026 edition”: first overall on Spotify with about one million daily streams, present in the Top 5 of both the Press Room and the Televote, with a song written by the Abbate/La Cava duo, two of the restricted group of serial winners. The song addressed emotional vulnerability and fatherhood, themes that, as the model rightly noted, generate a higher sharing rate than the classic theme of love.

Predicted third place: Sal Da Vinci. Here Gemini showed partial lucidity. It recognized the sociological phenomenon of the Neapolitan singer-songwriter, first overall on YouTube with over 649,000 views and with a song by the same authors as Fedez/Masini, but it relegated him to bronze, convinced that the Press Room would build an insurmountable wall against his neo-melodic aesthetic. A prejudice, it would turn out, not entirely well-founded.

Also noteworthy were the other names included in the hypothetical top five: Arisa, shielded by critical favor but with relatively lukewarm streaming; and Sayf, the Gen Z underdog with almost one million daily listens on Spotify but ignored by the Press Room.

What actually happened: prediction vs. reality

And then the final arrived. And reality decided, as always, not to read the analysis reports.

Sal Da Vinci won with 22.2% of the final weighted score. Sayf came second with 21.9%. Ditonellapiaga third with 20.6%. Arisa fourth with 18.9%. Fedez and Masini fifth with 16.5%. And Serena Brancale, the “designated winner” with almost absolute statistical certainty? Ninth.

However, these results must be read with honesty, without turning the post-mortem into a simple trial of errors. Because Gemini hadn’t gotten everything wrong. Four of the five artists in the actual top five had been identified by the model as relevant candidates; Sal Da Vinci, Sayf, Arisa, and Fedez/Masini were all in the pool of those analyzed carefully. The problem was not the map of the territory, it was the scale of probabilities. Like a meteorologist who correctly predicts the fronts at play but gets the trajectory wrong, Gemini had seen the players but misunderstood the forces that would move them.

Where, then, did the model miss the target most spectacularly?

The first error concerns Sal Da Vinci and the Press Room. Gemini had assumed that journalists would penalize the Neapolitan neo-melodic song. It didn’t happen that way. Sal Da Vinci’s live interpretative power generated a transversal empathy that convinced the critics to reward him despite initial aesthetic reservations. In the pure televote, moreover, he had lost: Sayf had obtained 26.4% against 23.6% for Sal Da Vinci. His final victory happened precisely thanks to the support of the press and radio, exactly the opposite of what the model predicted.
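The arithmetic behind that reversal can be checked with a short sketch. It assumes the final score is a plain weighted sum (34% televote, 33% each for press and radio) and that the published final and televote percentages are on the same scale. Both are assumptions, and the published figures are rounded. The helper name `implied_jury_average` is hypothetical.

```python
TELEVOTE_WEIGHT = 0.34
JURY_WEIGHT = 0.33  # applied to each of the press and radio juries


def implied_jury_average(final_pct: float, televote_pct: float) -> float:
    """Average press/radio share implied by a final score and a televote share."""
    jury_points = final_pct - TELEVOTE_WEIGHT * televote_pct
    return jury_points / (2 * JURY_WEIGHT)


# Final and televote percentages as reported in this article.
sal = implied_jury_average(final_pct=22.2, televote_pct=23.6)
sayf = implied_jury_average(final_pct=21.9, televote_pct=26.4)

print(round(sal, 1))   # 21.5
print(round(sayf, 1))  # 19.6
```

Under these assumptions, Sal Da Vinci's average share across the two juries comes out roughly two points above Sayf's, enough to offset a 2.8-point deficit in the televote.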

The second error concerns the conversion of streams into SMS votes. Fedez and Masini dominated Spotify with about one million daily listens, yet in the final they collected only 11.9% of the televote. Those who listen to a song on streaming don’t necessarily vote, and a digital fanbase is not automatically a mobilized fanbase. It’s a distinction that seems obvious but that predictive models tend to ignore because streaming data is numerically seductive, easy to measure, and difficult to interpret in the right context.

The third error, perhaps the most instructive, concerns the bookmakers and the trap of collective confirmation. Betting agencies had Brancale as the clear favorite. Gemini believed them. But bookmakers operate on money flows that reflect the media perception of the first nights; they tend to photograph initial enthusiasm rather than the dynamic evolution of consensus on subsequent nights. Brancale’s final result in ninth place is proof that not even the market, with all its presumed capacity to aggregate information, can predict the emotional complexity of a television final.

Ditonellapiaga, excluded from the predicted podium because she was considered strong on the radio but weak on the televote, instead managed to merge the two dimensions: 18.9% in the televote combined with huge radio support brought her to actual third place. The model had identified her as an excellent outsider but had underestimated the ability of a strongly rhythmic song to win over the home audience once the final night arrived.

The oracle and your data: why this really matters

Sanremo was a game. But the mechanism that produced these errors is not exclusive to Sanremo. It is the mechanism of any predictive system based on artificial intelligence, applied to any domain: finance, marketing, human resources, sales forecasting, risk analysis.

AIs, including the most advanced models like Gemini, ChatGPT, or Claude, work on statistical patterns extracted from historical data. They are exceptionally good at recognizing regularities, compressing complexity, and identifying correlations that a human analyst would take weeks to find. Gemini’s report on Sanremo was, from this point of view, an impressive performance: it had precisely mapped the DNA of the winners of the last decade, identified the structural factors of success, and built a coherent and well-argued internal model.

The problem is not the quality of the analysis. The problem is that the future is not in the historical data. Or rather: historical data tells us what happened and under what conditions. It doesn’t tell us how new variables will behave: the live performance that generates an unexpected emotion, the moment when an audience decides to rebel against the logic of constructed approval, the mobilization of a fanbase that hadn’t been considered determined enough.

What Gemini couldn’t know, and what no model could have known before the final, is that Sal Da Vinci would cry on the Ariston stage, that the moment would go viral on social networks, and that the emotion wouldn’t remain confined to the national-popular audience but would cross demographic barriers and convince even the critics to rewrite their aesthetic hierarchies. Human variables, those that emerge from the unrepeatability of a moment, cannot be neatly categorized in a dataset.

Gemini’s debrief on its own errors, which the model produced independently when we showed it the real results, was of rare lucidity. It precisely identified the three breaking points: the overestimation of bookmakers, the uncritical translation of streams into televoting power, and the aesthetic prejudice regarding the Press Room. It managed, in essence, to perform an excellent analysis of the recent past. But this is a different skill, and of different value, compared to predicting the future.

Here is the point that matters: if someone proposes an artificial intelligence system capable of “predicting” the behavior of your customers, the performance of your salespeople, the success of your next product, with the same confidence with which Gemini had crowned Serena Brancale, the right question to ask is not “how accurate is the model?” but “what data was it trained on, and what variables is it unable to see?”. Models give you results based on how they are instructed and what they are shown. Change the input data, and the predictions will change. Not because the model is stupid, but because no model sees what it hasn’t been given to see.

Artificial intelligence is a powerful tool for reducing uncertainty, not for eliminating it. Anyone who sells it to you as an oracle is doing marketing, not science. And the difference, when you base important decisions on it, can be very costly.

What would change for 2027: the model that learns

The final chapter of this story is perhaps the most interesting, because it shows exactly how a predictive system should evolve after a failure. We asked Gemini to build a strategic document for Sanremo 2027, and the model responded with a series of methodological corrections that serve as a general lesson.

For next year, the model suggests weighing the emotional impact of live performances much more than pre-Festival data: a standing ovation at the Ariston, Gemini understood, is worth more than a thousand streams on Spotify when the Press Room has to decide. It suggests treating streaming data as indicators of popularity and radio potential, not as a proxy for televoting strength. And it suggests looking much more closely at the identity of the new artistic direction; for 2027, with Stefano De Martino hosting and possible additions from the international music industry, the aesthetic coordinates of the Festival could shift towards more exportable and visually impactful proposals.

It is a process of iterative calibration. A prediction is made, the errors are measured, the weights are corrected, and the cycle starts again. It is no guarantee of success, but it is the only honest approach to complexity.
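A toy version of that loop might look like the sketch below. It is not Gemini's procedure, only a generic gradient-style update on a linear score. The function names, the two signals (streaming, live impact), their values and the target are all hypothetical.

```python
def predict(weights: list[float], signals: list[float]) -> float:
    """Linear score: each signal multiplied by its weight, then summed."""
    return sum(w * s for w, s in zip(weights, signals))


def recalibrate(weights, signals, actual, learning_rate=0.1):
    """One correction step: shift each weight against the prediction error."""
    error = predict(weights, signals) - actual
    return [w - learning_rate * error * s for w, s in zip(weights, signals)]


# Hypothetical normalized signals (streaming, live impact) and outcome.
weights = [0.8, 0.2]
signals = [0.9, 0.3]
actual = 0.4

error_before = abs(predict(weights, signals) - actual)
for _ in range(20):  # predict, measure the error, correct, repeat
    weights = recalibrate(weights, signals, actual)
error_after = abs(predict(weights, signals) - actual)

print(error_after < error_before)  # True
```

With a single observation this only shows the mechanics: the weight on the signal that drove the overestimate shrinks the most. A real recalibration would need many editions of data and would still be blind to variables it was never shown.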

It applies to Sanremo. It applies to your data. It applies to any system where the future depends on human variables that no dataset has yet truly learned to measure.

*The Gemini analyses cited in this article were conducted in February 2026 during the evenings of the Sanremo Festival. The listening, streaming, and voting data are the official ones from the 2026 edition.