Forget the romantic image of a timekeeper pressing a stopwatch at the edge of the track, or a judge scribbling scores in a notebook.

The Milano Cortina 2026 Winter Olympics were not just a global sporting event. They marked a new ceiling for digital infrastructure — one of the most complex, distributed, real-time, mission-critical systems ever orchestrated at planetary scale.

While most viewers became overnight experts in curling, snowboarding, and short track, anyone who writes code saw something else: a massive distributed platform. It was a real-time system built from high-throughput computer vision pipelines processing tens of thousands of frames per second, high-performance cloud architectures, edge nodes scattered across the Alps, and Zero Trust security models designed to defend every single bit.

Timing at 40,000 Frames per Second

Let’s start with the mythical timekeeper — the anonymous hero whose reaction time could once influence an athlete’s destiny by precious hundredths of a second.

That era is over.

Historic watchmaking brands have evolved into applied-innovation labs. The Swatch Group, owner of brands including Omega, Longines, and Tissot, has pushed sports timing into computational extremes.

Omega’s Scan’O’Vision ULTIMATE system captures up to 40,000 images per second.

For anyone working in computer vision, that number is more impressive than the race itself.

Forty thousand frames per second demands extreme throughput and ultra-low latency, which in practice means processing close to the sensor. You cannot ship that firehose of data to a remote data center and hope for deterministic results. You need edge computing:

- On-site GPUs

- Hardware accelerators

- Optimized inference pipelines

- Deterministic latency guarantees

This is not video recording. It is real-time computational adjudication.
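To put rough numbers on that firehose, here is a back-of-the-envelope sketch. Only the 40,000 images per second comes from the figure quoted above. The sensor geometry (a line-scan style capture of a single 2,048-pixel column) and the 24-bit color depth are assumptions made purely for illustration, not published specifications.

```python
def raw_data_rate_gbps(frames_per_second: int, pixels_per_frame: int, bits_per_pixel: int) -> float:
    """Uncompressed sensor output in gigabits per second."""
    return frames_per_second * pixels_per_frame * bits_per_pixel / 1e9


def frame_budget_us(frames_per_second: int) -> float:
    """Time available to handle one frame, in microseconds."""
    return 1_000_000 / frames_per_second


FPS = 40_000             # figure quoted in the text
PIXELS_PER_LINE = 2_048  # assumption: one vertical pixel column per image
BITS_PER_PIXEL = 24      # assumption: 8 bits per RGB channel

rate = raw_data_rate_gbps(FPS, PIXELS_PER_LINE, BITS_PER_PIXEL)
budget = frame_budget_us(FPS)
print(f"{rate:.2f} Gbit/s raw, {budget:.0f} microseconds per image")
```

Under these assumptions a single camera produces close to 2 Gbit/s of raw data and leaves 25 microseconds per image, which is why a round trip to a remote data center is a poor fit for the timing path.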

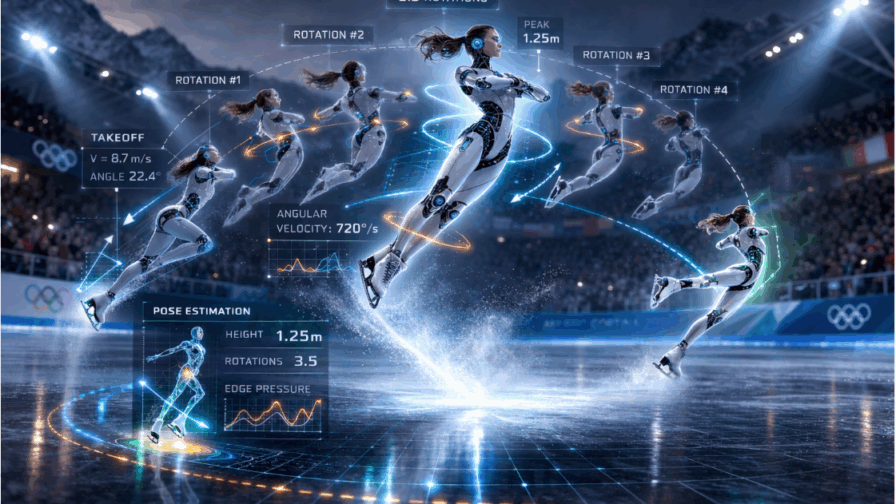

From Subjective Judging to 3D Geometry

Figure skating has always had a political undertone. The difference between a clean jump and an under-rotation can be a matter of milliseconds and degrees.

In 2026, pose estimation systems did not merely “track” athletes. They reconstructed 3D geometries in real time. They calculated:

- Rotation angles

- Take-off height

- Axis stability

- Number of revolutions

Subjectivity did not disappear, but it was drastically reduced.
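As an illustration of how a revolution count can fall out of pose data, the sketch below accumulates the heading of the hip line from frame to frame. The keypoint format (left and right hip as horizontal x, y coordinates) and the synthetic 3.5-revolution jump are assumptions for the example. The systems used at the Games are not documented here, and they work on full 3D reconstructions rather than two points.

```python
import math


def hip_yaw(left_hip, right_hip):
    """Heading of the hip line in the horizontal plane, in radians."""
    dx = right_hip[0] - left_hip[0]
    dy = right_hip[1] - left_hip[1]
    return math.atan2(dy, dx)


def total_revolutions(yaws):
    """Sum frame-to-frame yaw changes, wrapping each step into [-pi, pi)."""
    total = 0.0
    for prev, cur in zip(yaws, yaws[1:]):
        step = (cur - prev + math.pi) % (2 * math.pi) - math.pi
        total += step
    return total / (2 * math.pi)


# Synthetic airborne phase: 3.5 revolutions sampled over 61 frames.
FRAMES = 60
TRUE_REVS = 3.5
HALF_HIP_WIDTH = 0.15  # metres, illustrative only

yaws = []
for k in range(FRAMES + 1):
    angle = 2 * math.pi * TRUE_REVS * k / FRAMES
    right = (HALF_HIP_WIDTH * math.cos(angle), HALF_HIP_WIDTH * math.sin(angle))
    left = (-right[0], -right[1])
    yaws.append(hip_yaw(left, right))

revs = total_revolutions(yaws)
print(f"estimated revolutions: {revs:.2f}")
```

The wrapping step only works if the skater turns less than half a revolution between consecutive frames, which is one more reason high frame rates matter for this kind of measurement.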

In alpine skiing and ski jumping, computer vision systems generated near-instantaneous 3D motion maps. Biomechanical analysis became a live feature-extraction stream:

- Weight distribution

- Edge angles

- Optimal trajectory deviations

This was no longer post-event video review. It was live data analytics mid-competition.

Wearables in Extreme Environments

Wearables did not disappear — they evolved.

In training environments, smart clothing and ruggedized biometric devices monitored physiological parameters under extreme conditions. At –20°C or –30°C, consumer smartwatches struggle, not least because cold drains their batteries. The ruggedized devices, by contrast, were engineered for thermal resilience, shock resistance, and sustained telemetry transmission.

The hardware problem is as serious as the software one.

Real-Time Data Engineering: CI/CD for Performance

Collecting data is the easy part. The real differentiator is the latency between acquisition, processing, and decision-making.

In bobsleigh and skeleton, analysis between runs is a race against time. Push-line metrics, aerodynamic efficiency, and micro-trajectory deviations were all streamed into cloud platforms such as Snowflake, processed, and fed back to coaches almost instantly.

It is a continuous feedback loop.

If we borrow language from software engineering: it is CI/CD applied to athletic performance. Each run is a release. Each adjustment is a patch.
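A minimal sketch of one turn of that loop: compare the cumulative split times of the latest run against a reference run and report where time was lost. The split values and the four-sector layout are invented for the example, and a real pipeline would read them from a streaming platform rather than from hard-coded lists.

```python
def sector_deltas(reference_ms, run_ms):
    """Per-sector time lost (+) or gained (-) versus the reference.

    Both inputs are cumulative split times in milliseconds.
    """
    deltas = []
    prev_ref = prev_run = 0
    for ref, run in zip(reference_ms, run_ms):
        deltas.append((run - prev_run) - (ref - prev_ref))
        prev_ref, prev_run = ref, run
    return deltas


# Hypothetical cumulative splits for a reference run and the latest run.
reference = [5020, 18350, 33110, 52480]
latest = [5050, 18370, 33210, 52570]

deltas = sector_deltas(reference, latest)
worst_sector = max(range(len(deltas)), key=lambda i: deltas[i])
print(deltas, "-> focus on sector", worst_sector + 1)
```

Working in integer milliseconds keeps the arithmetic exact, and the per-sector view tells a coach where to look before the next run instead of only how much slower it was overall.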

Even curling — arguably the most underestimated sport from a tech perspective — hides serious engineering. “Smart stones” integrate sensors measuring trajectory, rotation, and velocity. Under-ice detection systems automatically determine hog-line violations.
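The hog-line decision itself reduces to a small predicate once the sensor data is available. The sketch below assumes a hypothetical sample format, with the stone's position along the sheet and a flag for whether the handle is being touched. The coordinates are illustrative and do not reflect real sheet dimensions or the actual detection hardware.

```python
from typing import NamedTuple


class Sample(NamedTuple):
    position_m: float      # hypothetical: stone position along the sheet
    handle_touched: bool   # hypothetical: touch sensor reading on the handle


def hog_line_violation(samples, hog_line_m):
    """True if the handle is still being touched at or beyond the hog line."""
    return any(s.handle_touched and s.position_m >= hog_line_m for s in samples)


HOG_LINE_M = 10.0  # illustrative coordinate, not a regulation distance

clean_delivery = [Sample(8.0, True), Sample(9.5, True), Sample(9.9, False), Sample(10.4, False)]
late_release = [Sample(8.0, True), Sample(9.8, True), Sample(10.1, True), Sample(10.6, False)]

print(hog_line_violation(clean_delivery, HOG_LINE_M))
print(hog_line_violation(late_release, HOG_LINE_M))
```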

Understanding the sensor-to-server pipeline is often easier than understanding curling’s rulebook.

Augmented Spectatorship

The result of this data avalanche is not just more precise refereeing. It is augmented storytelling.

Graphic overlays. Vector projections. Real-time physics simulations. The ice becomes a dashboard. A computational canvas.

When athletes like Alysa Liu perform, motion traces and trajectory projections turn performance into a data-augmented narrative. Watching routines set to Donna Summer now comes with embedded kinematics.

You cannot unsee it once you have seen the data layer.

Cloud Broadcasting: The Control Room Goes Virtual

On the broadcasting side, the transformation was quieter but just as radical.

Olympic Broadcasting Services (OBS), the host broadcaster owned by the International Olympic Committee, has progressively virtualized production workflows.

Traditional OB vans — massive trucks packed with switching consoles, mixers, and racks of hardware — are being replaced by virtual OB vans in the cloud.

Instead of transporting tons of equipment:

- Cameras and encoders remain on-site

- Video/audio feeds are transmitted over high-bandwidth IP networks

- Production (switching, mixing, graphics, replay) runs in remote cloud instances

Operators interact with software interfaces that emulate physical consoles. Infrastructure is typically provisioned on hyperscale cloud platforms such as Amazon Web Services or Google Cloud.

The shift is structural:

- CAPEX-heavy truck fleets → OPEX, pay-as-you-go cloud usage

- Physical constraints → elastic compute scaling

- On-site crews → distributed production teams

For anyone in DevOps, it reads like a wish list: distributed infrastructure, elastic workloads, orchestration of ultra-high-bandwidth streams.

Add to that:

- FPV drones chasing athletes downhill

- 360-degree replay systems stitching feeds from dozens of cameras

- Freeze-frame volumetric reconstructions rendered in near real time

All powered by high-performance cloud pipelines.

On Olympics.com, conversational assistants allowed users to query statistics and regulations in natural language — another application layer integrating NLP models, caching layers, and structured databases in a tightly coupled ecosystem.

Zero Trust: Security as Architecture

An infrastructure this distributed inevitably expands the attack surface.

Video streams, biometric data, telemetry, cloud APIs — every node is a potential entry point.

The Zero Trust paradigm is no longer optional.

There is no secure perimeter. Every device — camera, drone, encoder — must authenticate continuously. Networks are micro-segmented. Privileges are minimized. Access is validated in real time.
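One way to picture continuous authentication is a short-lived signed token that each device must present again and again. The sketch below uses HMAC-SHA256 from the Python standard library. The device names, the shared secret, and the 30-second lifetime are assumptions for illustration, and a production deployment would more likely rely on certificates and a dedicated identity service than on shared keys.

```python
import hashlib
import hmac

# Hypothetical per-device secrets; real systems would not keep these in code.
DEVICE_KEYS = {"camera-07": b"hypothetical-shared-secret"}


def sign_token(device_id: str, issued_at: int, key: bytes) -> str:
    """Sign the device identity together with the issue time."""
    message = f"{device_id}|{issued_at}".encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify_token(device_id: str, issued_at: int, signature: str, now: int, max_age_s: int = 30) -> bool:
    """Accept only known devices presenting a fresh, correctly signed token."""
    key = DEVICE_KEYS.get(device_id)
    if key is None:
        return False  # unknown device: never trusted by default
    if not 0 <= now - issued_at <= max_age_s:
        return False  # expired or issued in the future: must re-authenticate
    expected = sign_token(device_id, issued_at, key)
    return hmac.compare_digest(expected, signature)


token = sign_token("camera-07", 1000, DEVICE_KEYS["camera-07"])
print(verify_token("camera-07", 1000, token, now=1010))  # fresh token
print(verify_token("camera-07", 1000, token, now=1100))  # same token, too old
```

The short lifetime is the point: a token that was valid a minute ago grants nothing now, so every device keeps proving its identity instead of being trusted once at the perimeter.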

Vendors such as Hewlett Packard Enterprise and Juniper Networks have contributed to architectures where security is native, not retrofitted.

AI systems monitored traffic patterns, detected anomalies, and mitigated potential DDoS attacks in real time. A disruption during an Olympic final is not just a technical issue — it is a global incident.
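Traffic anomaly detection can be far more sophisticated than this, but a rolling z-score conveys the basic idea: learn what normal looks like, then flag sharp departures from it. The window size, threshold, and request rates below are invented for the example and stand in for whatever models were actually deployed.

```python
from collections import deque
from statistics import mean, pstdev


class RateAnomalyDetector:
    """Flag request rates far above the recent baseline (rolling z-score)."""

    def __init__(self, window: int = 30, threshold: float = 4.0, warmup: int = 5):
        self.history = deque(maxlen=window)
        self.threshold = threshold
        self.warmup = warmup

    def observe(self, requests_per_second: float) -> bool:
        is_anomaly = False
        if len(self.history) >= self.warmup:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0 and (requests_per_second - mu) / sigma > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            # Only normal samples update the baseline, so an attack
            # cannot gradually teach the detector that it is normal.
            self.history.append(requests_per_second)
        return is_anomaly


detector = RateAnomalyDetector()
traffic = [1000, 1020, 980, 1010, 990, 1005, 995, 1015, 9000, 1002]
flags = [detector.observe(rate) for rate in traffic]
print(flags)
```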

Defense had to be proactive.

Ironically, the most visible failures during the Games were human errors. The infrastructure itself held.

Code Is Part of the Team

What remains underappreciated is the invisible layer.

Behind every hundredth of a second:

- An optimized model

- A refined query

- A more efficient pipeline

Behind every immersive replay:

- A scalable workflow

- A cloud orchestration strategy

Behind every secure stream:

- A Zero Trust architecture designed for millions of concurrent requests

When we turned on the TV or opened a stream, we saw snow, ice, and adrenaline. Beneath that surface was a global software platform silently orchestrating data, video, and decisions in real time.

We celebrated athletes like Francesca Lollobrigida and Jordan Stolz. But behind every medal was a distributed system operating at industrial scale.

Maybe one day there will be a medal for the fastest query, the cleanest data pipeline, the most resilient architecture.

And if that happens, it should not be gold.

It should be copper — the metal that carries the signal.