Artificial intelligence (AI) systems have become far more capable over the past few years, and they have helped businesses work more efficiently. With this technology, machines can perform tasks that normally require human intelligence and can mimic some human actions. This has enabled high levels of automation and has helped companies cut costs. While AI can be extremely useful, it also raises various ethical issues. This guide explores those concerns by covering some of the main challenges and concepts of ethics in artificial intelligence.

Current Situation of AI Development

Many businesses today use artificial intelligence to run their operations. This technology has made its way into various enterprise applications, including customer relationship management and workforce productivity. Investment in this technology has grown to over $70 billion, and AI experts have become some of the most sought-after professionals in the world. Voice-based assistants are among the most common forms of AI, and they are used in a wide range of industries, including automotive, IT, and retail.

Many companies also use chatbots for customer support. These bots can improve customer satisfaction, and they save companies money by reducing the number of customer service assistants they need to hire. Here are other popular uses of AI systems:

- Personalised shopping in online stores

- Forecasts for financial services

- Network feeds on social media sites

- Security systems and face detection

- Anti-virus threat detection

- Warehouse management

- Safety in the automotive sector

- The internet of things

Augmented intelligence is an emerging form of AI that aims to enhance human intelligence. Edge AI is also being developed, and it allows AI algorithms to run locally on a device without an internet connection. For example, some forms of facial recognition can process data on the device without internet access.

Ethical Issues in Technology

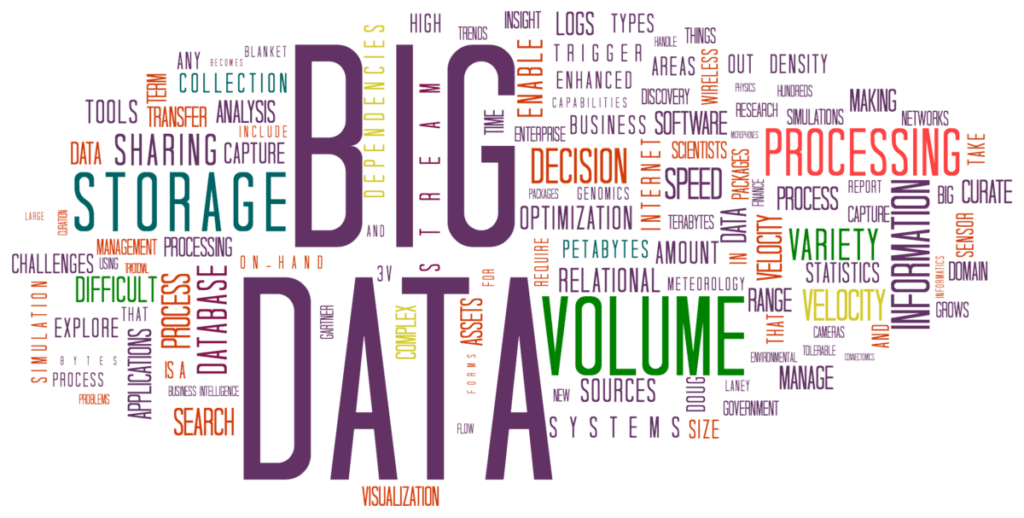

Businesses often strive to be ethical in their decision-making and practices. People tend to think poorly of brands that don’t take ethics seriously, and most wouldn’t be happy to work with such companies. Since AI is becoming a key part of our lives, it is important to consider ethics in artificial intelligence. With technology, businesses can collect and store users’ information. Whenever you browse websites, buy things online, or enter your information on social media sites, you are providing personal data to companies.

While this data is primarily meant to personalize our experiences on various sites, it can easily be misused or lost. Data has become the new gold, and many companies make money by selling their customers’ information. For businesses, it is extremely valuable to know which products are being searched for and what customers prefer. Politicians also want to know which social issues are getting the most attention. This demand has created an entire market where businesses can buy and sell customer information.

While technology helps companies reduce staffing costs, it can end up making certain jobs obsolete. This can make workers less confident in their job security. It is worth noting that advanced AI may be able to handle skilled work like accounting and blogging, so it’s not just routine tasks that will be affected.

Ethics in Artificial Intelligence

Artificial intelligence offers many benefits to society. However, it also presents various ethical dilemmas. These include risks to user privacy, the complexity of neutrality, and the issue of unconscious bias.

1. Risks to User Privacy

Companies feed a lot of data into their AI systems, and this information is susceptible to data breaches. The loss of data can lead to identity theft and other serious issues. It is also possible for artificial intelligence systems to infer or generate personal data about individuals without their permission.

Another form of AI that intrudes on user privacy is facial recognition. This technology can identify individuals without their consent. It relies on real-time public surveillance or on aggregating databases, often in ways whose legal basis is unclear. It can be used to track a person’s movements through a city where there are surveillance cameras. Facial recognition is restricted or banned in a number of jurisdictions because it invades people’s privacy. Health tracking is also an ethical issue in AI, and it was a major concern around the world during the Covid-19 pandemic. Overall, artificial intelligence raises the analysis of personal information to new levels, and this intrudes on personal privacy.

2. Complexity of Neutrality in Technology

AI has traditionally been considered a neutral technology, but this has proven to be far from the truth. The technology can misidentify individuals because of their race or other characteristics. It is also used in granting loans, where it can discriminate against people because of their gender or race. For example, a man and a woman with the same credit history and income may be approved for loans of different sizes purely because of their gender. The complexity of neutrality arises because humans unknowingly transfer existing stereotypes through the data collection process. Developers may also introduce their own biases when programming the systems. This is a major ethical concern, as artificial intelligence is used to make decisions in many areas of our lives. It is applied in the health sector, the financial sector, and other crucial industries. It can even filter out certain job candidates because of this bias.
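
One simple way to look for the kind of loan disparity described above is to compare approval rates across groups. The sketch below is only an illustration: the decisions are made up, and the helper names `approval_rates` and `disparate_impact_ratio` are hypothetical, not taken from any particular library. A ratio well below 1.0 does not prove discrimination, but it suggests the decisions deserve a closer look.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the share of approved applications per group.

    `decisions` is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so the rates are trivially equal
    return min(rates.values()) / highest


# Invented decisions, for illustration only.
sample_decisions = (
    [("men", True)] * 8 + [("men", False)] * 2
    + [("women", True)] * 5 + [("women", False)] * 5
)

rates = approval_rates(sample_decisions)
ratio = disparate_impact_ratio(rates)
print(rates)
print(round(ratio, 3))
```

A check like this only covers one narrow notion of fairness. It says nothing about whether applicants with the same credit history and income were treated alike, which would require comparing matched applicants.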

3. Unconscious Bias in Data

Bias in artificial intelligence occurs when a system’s results cannot be generalized beyond the data it was trained on. This issue can result from preferences or exclusions in training data, but it also emerges from how the data is obtained and how the algorithm is designed. For example, a researcher can collect data on the heights of people in a city. This information can carry unconscious bias depending on which people were surveyed or when the data was collected. If a data set has a bias, an artificial intelligence system trained on it will learn and apply that bias. Unconscious bias can present ethical issues as it can lead to discrimination. For example, a company can use AI to improve diversity in its hiring process. However, since the system learns from the data it’s fed, it may end up favouring applicants from certain demographic groups. Legal systems can also try to create more standard and fair sentencing guidelines by training AI systems, but these are still likely to give harsher sentences to people from specific groups.
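
The height survey example can be made concrete with a few lines of code. This is a minimal sketch with invented numbers: it assumes a tiny "city" of ten people and a survey that only reaches adults, for instance because it was carried out at workplaces during office hours. The names `population` and `office_hours_sample` are hypothetical.

```python
from statistics import mean

# Hypothetical city population as (group, height in cm); values are invented.
population = (
    [("adult", h) for h in (160, 165, 170, 175, 180)]
    + [("child", h) for h in (110, 120, 130, 140, 150)]
)


def mean_height(records):
    """Average height over a list of (group, height) records."""
    return mean([height for _, height in records])


# A survey run at workplaces during office hours only reaches adults.
office_hours_sample = [record for record in population if record[0] == "adult"]

true_mean = mean_height(population)
biased_mean = mean_height(office_hours_sample)
print(true_mean, biased_mean, biased_mean - true_mean)
```

Nothing in the biased sample is wrong on its own. The error comes from who was left out, which is why a model trained on it would overestimate the typical height in the city.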

4. Why AI Systems Replicate Cultural and Societal Norms

AI systems end up replicating societal norms because they learn from data produced by humans. For example, machine learning models for translation have been noted to associate male names with words like ‘salary’ or ‘professional’, while female names have been associated with terms like ‘wedding’. The algorithm is unlikely to be making the association on its own and may simply be trained on texts that reflect these gender tropes.
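
To see how such associations can come straight from the training text, consider counting how often names and certain words appear in the same sentence. The sketch below uses a tiny invented corpus and a hypothetical helper called `cooccurrence`. Real language models learn from far larger text collections and use more sophisticated statistics, so treat this as an illustration of the idea only.

```python
from collections import Counter

# Tiny invented corpus that mirrors the gender tropes described above.
corpus = [
    "john negotiated his salary",
    "john is a professional engineer",
    "mary planned her wedding",
    "mary discussed the wedding venue",
    "mary negotiated her salary",
]


def cooccurrence(sentences, names, targets):
    """Count sentences in which a name and a target word both appear."""
    counts = Counter()
    for sentence in sentences:
        words = set(sentence.split())
        for name in names & words:
            for target in targets & words:
                counts[(name, target)] += 1
    return counts


counts = cooccurrence(
    corpus,
    names={"john", "mary"},
    targets={"salary", "professional", "wedding"},
)
for pair, count in sorted(counts.items()):
    print(pair, count)
```

A model that learns only from these counts would link ‘mary’ to ‘wedding’ more strongly than to ‘salary’, even though nobody programmed that link. The skew is in the text it was given.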

Possible Solutions

Governments around the world have introduced legislation to protect people from the misuse of their data. The European Union’s General Data Protection Regulation (GDPR), which took effect in May 2018, sets out how organizations must handle and protect personal data. Businesses that offer goods and services to people in the EU have to comply with this law to continue operating there.

Bias in AI can be mitigated by using gender-neutral language when training the system. For example, instead of using the word ‘businessman’, you can use the term ‘businessperson’. This can make it harder for the system to discriminate against people based on gender. It is also necessary for companies to use diverse teams to train the AI system. Customers and users of the AI system can offer feedback so that the developer can keep track of how it is performing. You should have a concrete plan for improving the model based on the feedback you receive from users. The creator of the model should also make sure the training data is sufficient in both quantity and quality.
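
The gender-neutral wording suggestion can be sketched as a simple text pre-processing step. The mapping below is a short, illustrative list and the function name `neutralize` is hypothetical. A real project would need a much longer, reviewed list, and word substitution on its own is unlikely to remove every gender association from a data set.

```python
import re

# Illustrative mapping only; a real project would need a reviewed, longer list.
NEUTRAL_TERMS = {
    "businessman": "businessperson",
    "businesswoman": "businessperson",
    "chairman": "chairperson",
    "policeman": "police officer",
}

# Match any listed term as a whole word, ignoring case.
_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, NEUTRAL_TERMS)) + r")\b",
    flags=re.IGNORECASE,
)


def neutralize(text):
    """Replace gendered job titles in `text` with neutral equivalents."""
    def swap(match):
        word = match.group(0)
        replacement = NEUTRAL_TERMS[word.lower()]
        # Keep a leading capital letter if the original word had one.
        return replacement.capitalize() if word[0].isupper() else replacement

    return _PATTERN.sub(swap, text)


print(neutralize("The businessman met the Chairman."))
```

Running the substitution before training keeps the original text untouched, so the two versions can be compared if the change affects the model in unexpected ways.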