According to the software giants, voice-based UIs and conversational AI represent the next generation of computing interfaces. After decades of earlier approaches, including character-based, graphical, Web, and mobile interfaces, it’s voice’s turn.

This is a huge advance in the way we interact with computers. Unlike menus, touchscreens, or mouse clicks, voice conversation is one of the most natural ways to communicate: there is no learning curve, provided the listener is clever enough. Voice has some drawbacks, too.

Voice has been hailed as a ready-to-use interface more than once in the past, but now, thanks to the voice-based UI (or VUI, Voice User Interface), it is time for this to become reality. Current technology offers both an AI back office that interprets the meaning of what is said in each language and a hardware-driven ecosystem hungry for applications. In the voice paradigm, the situation is central to both sides of the conversation.

Amazon’s evangelist at Codemotion Milan 2019 was Andrea Muttoni, an Italian Senior Solution Architect at Alexa and a VUI technology evangelist. Andrea is also a musician; he enjoys composing music, another way to approach alien languages.

VUI: situational design is the masterplan

A comparison between audio and video experiences can help us navigate the VUI world. We often assume that the same design patterns will please people regardless of the medium, but this is not true. Let’s compare video and audio content: video expects uniformity, while audio needs variety. With Alexa, as with any other voice processing device, you have to write for the ears, not for the eyes.

Voice makes it easy to build a mock conversation, a true script, to exploit the potential of the situation. It’s necessary to take a step back from basic programming and focus on the situation: that’s why Muttoni prefers not to call this voice programming, but “situational design”, or SitDes for short. His talk in Rome was entitled “Situational Design – a New Way to Design for Voice”.

Compared with old IVR (Interactive Voice Response) designs, SitDes means no nesting and no flowcharting… what a change! “SitDes is what we see every day”, says Muttoni; “it’s not an obligation, but nothing more than a proposal, coming directly from the experience of the Alexa staff”.

This change can be good or bad. SitDes asks us to be crystal clear, yet we humans sometimes ask imprecise questions, use interchangeable words and phrases to say the same thing, or tell jokes. A well-designed voice experience becomes a real conversation when it takes all of these elements into account.

Natural voice variations

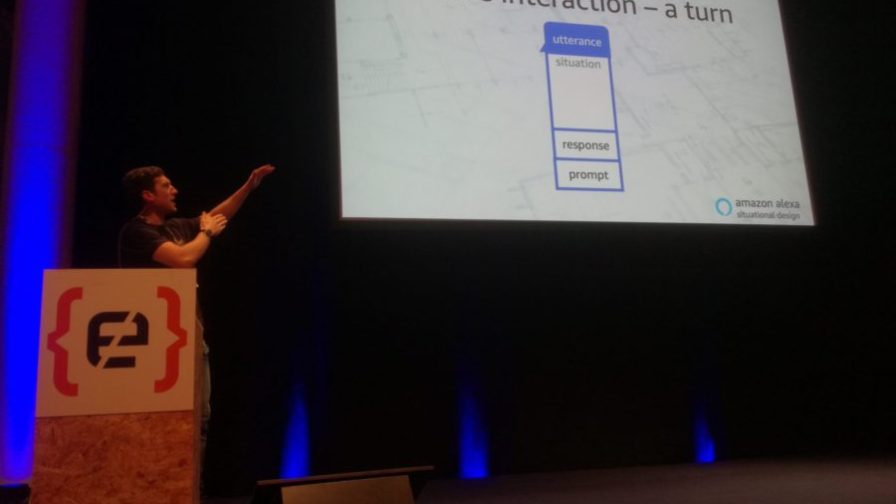

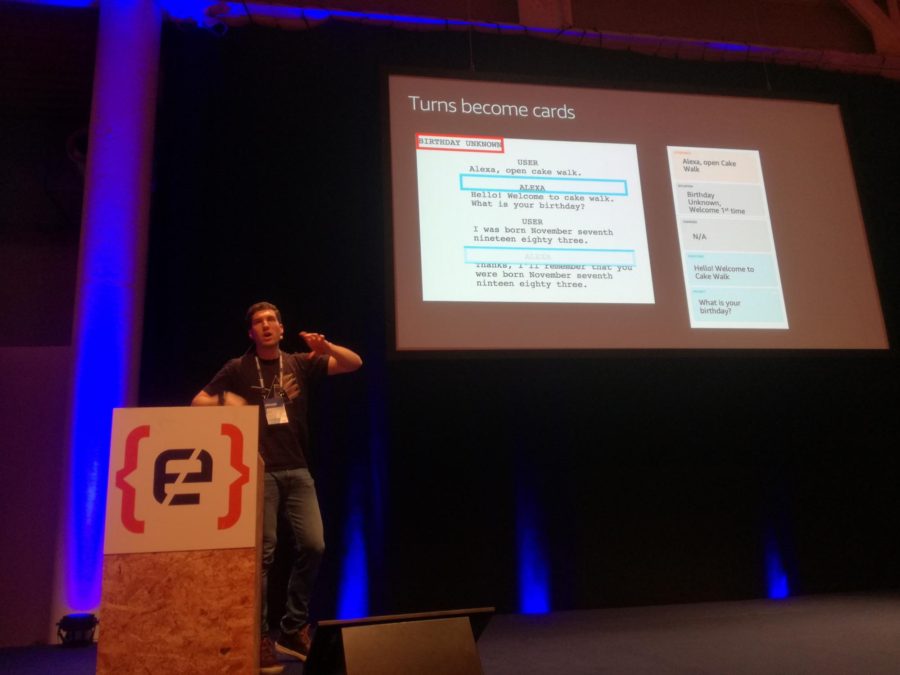

The building block of this new approach is the situation card, bringing together four basic elements: utterance, situation, response, and prompt.

The utterance is what the user says; the situation is the context; the response is what Alexa says immediately; the prompt is what Alexa asks.
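To make the four elements concrete, here is a minimal Python sketch of a situation card as a plain data structure. The class name `SituationCard`, its `speak` helper, and the trivia-game texts are hypothetical illustrations, not part of any Amazon tool or of the talk; the sketch simply assumes that Alexa’s turn is the immediate response followed by the prompt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SituationCard:
    """Hypothetical model of one situation card and its four elements."""
    utterance: str  # what the user says
    situation: str  # the context the conversation is in
    response: str   # what Alexa says immediately
    prompt: str     # what Alexa asks next

    def speak(self) -> str:
        # Alexa's turn: the immediate response, then the follow-up prompt.
        return f"{self.response} {self.prompt}"


card = SituationCard(
    utterance="Let's play a game",
    situation="First visit, no game in progress",
    response="Welcome to Trivia Night!",
    prompt="Would you like to hear the rules?",
)
print(card.speak())
```

Keeping the prompt separate from the response makes it easy to reuse the same question in different situations.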

One card describes one situation, while multiple cards can be combined to build a full storyboard.

Several cards for the same situation can be created inside a single storyboard. These are called variations, and they are very useful for keeping the conversation natural.
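Storyboards and variations can be sketched in the same spirit. In this hypothetical Python example (names such as `Storyboard` and `pick` are illustrative, not an Amazon API), cards are grouped by situation, and cards sharing a situation act as variations; `pick` chooses one at random while avoiding the variation used last time, which is one simple way to give audio the variety it needs.

```python
import random
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SituationCard:
    utterance: str  # what the user says
    situation: str  # the context
    response: str   # what Alexa says immediately
    prompt: str     # what Alexa asks next


@dataclass
class Storyboard:
    """Hypothetical storyboard: cards grouped by situation.

    Several cards that share a situation are variations of one another.
    """
    cards: dict[str, list[SituationCard]] = field(default_factory=dict)
    last_used: dict[str, SituationCard] = field(
        default_factory=dict, init=False, repr=False
    )

    def add(self, card: SituationCard) -> None:
        self.cards.setdefault(card.situation, []).append(card)

    def pick(self, situation: str, rng: random.Random) -> SituationCard:
        variations = self.cards[situation]
        previous = self.last_used.get(situation)
        # Avoid repeating the previous variation whenever there is a choice.
        candidates = [c for c in variations if c is not previous] or variations
        choice = rng.choice(candidates)
        self.last_used[situation] = choice
        return choice


board = Storyboard()
board.add(SituationCard("Hi", "returning user", "Welcome back!", "Ready for another round?"))
board.add(SituationCard("Hi", "returning user", "Good to hear from you again.", "Shall we continue?"))

rng = random.Random(42)
first = board.pick("returning user", rng)
second = board.pick("returning user", rng)
print(first.response, "|", second.response)
```

A seeded `random.Random` keeps the choice reproducible in tests; with only two variations, consecutive picks for the same situation always alternate.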

Variations are very interesting, as they model a truly conversational approach. The voice assistant can switch to a “courtesy mode” if the software detects that the person’s voice is more stressed than usual. Children can interact with a “child mode”, and voices could be modelled on that of a VIP, a champion, or any other person. Multilingual systems are also being pursued.

“We really believe that voice programming will be one of the new big things in VUI development”, states Muttoni. This wave has to be supported, so “Amazon is not charging anything for software development in order to help to develop a strong community and many use cases”. The return comes later: for any success stories that emerge, “many resources will be needed from the pool that Amazon sells regularly”.

The whole process is streamlined and easy to implement, thanks in part to an online guide provided by Amazon. The four phases of Alexa skill development (design, build, test, and certification) are all free. Parts of a skill can be implemented as AWS Lambda functions, exploiting the serverless model.
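As an illustration of the serverless option, the sketch below is a plain Python handler for AWS Lambda, written without the Alexa Skills Kit SDK. It assumes the standard Alexa request and response JSON shapes (`request.type`, `request.intent.name`, `outputSpeech`, `reprompt`); the intent name `StartGameIntent` and all texts are hypothetical, and mapping a card’s response and prompt onto `outputSpeech` and `reprompt` is one reasonable convention, not an official rule.

```python
# Hypothetical card table: request type or intent name -> (response, prompt).
# "StartGameIntent" and all texts are illustrative, not from a real skill.
CARDS = {
    "LaunchRequest": ("Welcome to Trivia Night!", "Would you like to hear the rules?"),
    "StartGameIntent": ("Great, let's start.", "What is the capital of Italy?"),
}
FALLBACK = ("Sorry, I didn't get that.", "What would you like to do?")


def build_response(response: str, prompt: str) -> dict:
    """Wrap a card's response and prompt in the Alexa response JSON shape."""
    return {
        "version": "1.0",
        "response": {
            # Alexa speaks the immediate response, then asks the prompt...
            "outputSpeech": {"type": "PlainText", "text": f"{response} {prompt}"},
            # ...and repeats the prompt if the user does not answer.
            "reprompt": {"outputSpeech": {"type": "PlainText", "text": prompt}},
            "shouldEndSession": False,
        },
    }


def lambda_handler(event, context):
    """Entry point invoked by AWS Lambda with the Alexa request as `event`."""
    request = event["request"]
    if request["type"] == "SessionEndedRequest":
        # The skill cannot speak once the session has ended.
        return {"version": "1.0", "response": {}}
    if request["type"] == "IntentRequest":
        key = request["intent"]["name"]
    else:
        key = request["type"]
    response, prompt = CARDS.get(key, FALLBACK)
    return build_response(response, prompt)


# Local usage with a hand-written, simplified Alexa event:
event = {"request": {"type": "IntentRequest", "intent": {"name": "StartGameIntent"}}}
print(lambda_handler(event, None)["response"]["outputSpeech"]["text"])
```

Real Alexa events carry more fields (session, context, locale); the handler only reads the ones it needs, so it can be exercised locally with a minimal dictionary like the one above.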

The Alexa trend also shows a significant increase in the variety of hardware devices available. For hardware, however, certification involves a small fee paid to the service company that performs it.

Most new voice-enabled hardware is embedded in mobile IoT devices, so a completely new family of services based on navigation APIs is expected to hit the market soon. Here Technologies, heir to Navteq and Nokia’s mapping business, will be one of the keys to this new market. Car assistants form the second wave of voice-enabled mobile devices, following smartphones.

At Codemotion Milan 2019, Michael Palermo’s talk, “Integrating Location in Conversational UX”, helped developers understand and integrate map data and related location services. Before joining Here Technologies, Michael evangelized the “smart home” concept at Amazon as part of the Alexa team. VUI and voice-enabled solutions also need a different approach to localization.