Looking at Unity today, it is clear how far it has come in 15 years: from humble beginnings to a powerhouse that can run and render incredible games, short movies, and other experiences. However, as the scope and size of the projects people create with Unity keep growing, it has become clear to us that we need to evolve Unity to a different level.

This evolution comes in the shape of what we call the Data-Oriented Tech Stack, or DOTS for short.

But… introductions first! My name is Ciro, and I work at Unity as an evangelist. It is my job to travel to conferences and keep people updated on the latest Unity trends and technology. Since DOTS is of most interest to programmers, this year I chose Codemotion as the perfect venue to talk about it.

Performance by default

You have probably heard this phrase before, “Performance by default”, as it is one of the central concepts of this evolution. We are aiming to build a new Unity that not only unlocks new levels of performance, but does so “by default”. This means that, whatever you do, your game will run faster than before, and you will spend less time devising strategies to optimise it.

Unity’s co-founder and CTO, Joachim Ante, first introduced this concept around GDC 2018. The first part of this video outlines the founding principles of DOTS very well:

https://www.youtube.com/watch?v=aFFLEiDr3T0

C# Job System

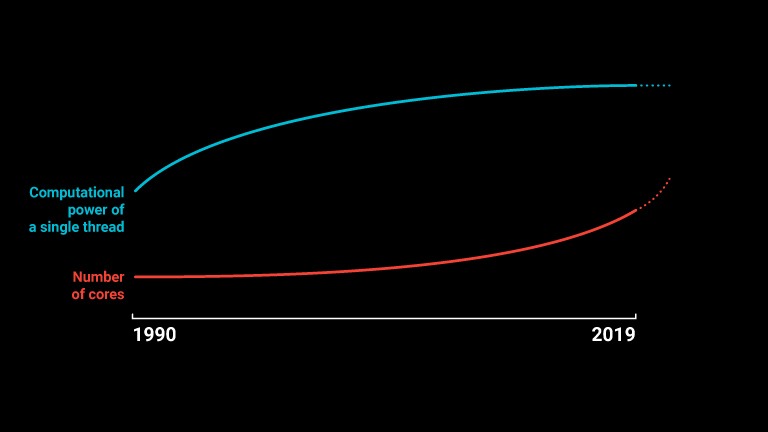

We have reached a point where we can no longer count on single processor cores becoming much faster, because of physical limits on how they are built. Instead, fast computing relies on processors with multiple cores, and programmers who go this route must deal with multi-threading.

Unity’s C# Job System is like a window into the underlying C++ Job System, which has powered the Unity engine for quite a while now. This means that your C# code runs alongside Unity’s own internal jobs, sharing the CPU’s worker threads so that all of them are used to their full potential. By contrast, regular C# code in Unity generally runs on a single thread, which is the case for almost all Unity games today. In fact, many Unity games are “CPU-bound”: the CPU is overloaded, so the GPU sits waiting for it, causing drops in framerate.
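To make the idea concrete, here is a minimal sketch of a “parallel for” written in Python rather than against Unity’s API. The helper `schedule_parallel_for` and its parameters are hypothetical; the batching only loosely mirrors the batch-size idea behind Unity’s `IJobParallelFor`. The key property is that each job writes only to its own slice of the output, so jobs can run on different threads without locks. Note that CPython’s global interpreter lock means this will not actually speed up pure-Python arithmetic; the sketch shows the structure, not the performance.

```python
from concurrent.futures import ThreadPoolExecutor


def schedule_parallel_for(data, out, batch_size, kernel, workers=4):
    """Compute out[i] = kernel(data[i]) for every i, split into independent batch jobs.

    Hypothetical helper for illustration only. Each job owns a disjoint index
    range, so no two jobs ever write the same element of `out`.
    """
    def job(start, end):
        for i in range(start, end):
            out[i] = kernel(data[i])

    # Carve [0, len(data)) into contiguous batches of at most batch_size items.
    ranges = [(s, min(s + batch_size, len(data)))
              for s in range(0, len(data), batch_size)]

    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(job, s, e) for s, e in ranges]
        for f in futures:
            f.result()  # wait for every job; re-raises any exception from a worker
    return out


# Usage: compute displacement = velocity * dt for 10 objects, 3 objects per job.
dt = 0.5
velocities = [float(i) for i in range(10)]
displacements = [0.0] * 10
schedule_parallel_for(velocities, displacements, batch_size=3, kernel=lambda v: v * dt)
print(displacements)  # [0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5]
```

The batch size is the design knob: larger batches mean less scheduling overhead per element, smaller batches let idle threads pick up leftover work more evenly.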

The Job System also features a robust safety net, which catches race conditions before they happen by analysing how jobs access memory, and reports them in the console. This lets you see clearly where the problem is, rather than watching your game crash or misbehave with no explanation.
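Unity’s real safety system is built into its native containers and is more sophisticated, but the core rule can be sketched in a few lines of Python. In this hypothetical model (`JobDecl` and `find_conflicts` are illustrative names, not Unity API), each job declares which containers it reads and writes and which jobs it depends on. Two jobs are flagged when no dependency orders them and at least one of them writes data the other touches.

```python
from dataclasses import dataclass, field


@dataclass
class JobDecl:
    """Hypothetical job declaration: what it reads, writes, and waits for."""
    name: str
    reads: set = field(default_factory=set)
    writes: set = field(default_factory=set)
    depends_on: set = field(default_factory=set)  # names of jobs that must finish first


def find_conflicts(jobs):
    """Return (job_a, job_b, container) triples that could race.

    Assumes every name in depends_on refers to a job in `jobs`.
    """
    by_name = {j.name: j for j in jobs}

    def waits_for(a, b):
        # True if job `a` (directly or transitively) depends on job `b`.
        stack, seen = list(by_name[a].depends_on), set()
        while stack:
            n = stack.pop()
            if n == b:
                return True
            if n not in seen:
                seen.add(n)
                stack.extend(by_name[n].depends_on)
        return False

    conflicts = []
    for i, a in enumerate(jobs):
        for b in jobs[i + 1:]:
            if waits_for(a.name, b.name) or waits_for(b.name, a.name):
                continue  # a dependency orders them, so they never overlap
            # A write in one job against any access in the other is a potential race.
            shared = (a.writes & (b.reads | b.writes)) | (b.writes & a.reads)
            for container in sorted(shared):
                conflicts.append((a.name, b.name, container))
    return conflicts


move = JobDecl("Move", reads={"velocities"}, writes={"positions"})
render = JobDecl("Render", reads={"positions"})
print(find_conflicts([move, render]))        # [('Move', 'Render', 'positions')]

render_after_move = JobDecl("Render", reads={"positions"}, depends_on={"Move"})
print(find_conflicts([move, render_after_move]))  # []
```

The fix the checker points you toward is the same one you would use in practice: declare the dependency, so the reader waits for the writer.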

Burst Compiler

This new compiler goes hand in hand with the C# Job System and makes it even faster. In short, it takes C# jobs and produces highly optimized machine code, compiled differently for different CPU architectures to take full advantage of each.

Compiling jobs with Burst makes them much faster than before, sometimes even faster than equivalent code written in C++. Explaining how Burst does this is beyond the scope of this short piece, so I invite you to read this great blog post on the subject by Unity Technical Director Lucas Meijer.

Entity Component System

When talking about unlocking performance, another piece of the puzzle is memory layout.

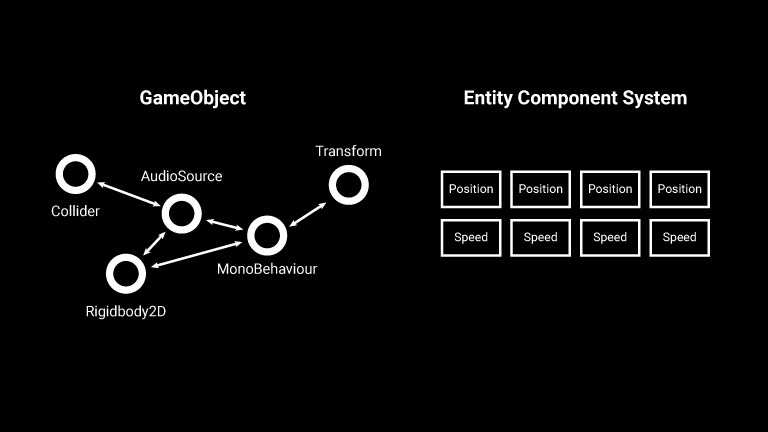

When working with GameObjects in Unity, each GameObject is stored somewhere in main memory (the RAM), while its Components are stored elsewhere, not necessarily nearby. As a result, all of the data you need for a certain game routine can be spread all over memory.

When a game runs, the CPU is continuously fetching data from main memory into the CPU cache so it can operate on it. Memory is fetched in fixed-size blocks, so if each fetch brings in very little useful data (because that data is spread apart), your game wastes a lot of CPU time waiting for memory. Now multiply this by every single operation in your game, and you can see why this limits how much can happen while keeping a decent framerate. This affects not only gameplay but also how big your game can be, since you may not be able to “stream in” objects and levels as fast as you need. Can we fix this?
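A back-of-the-envelope model shows why scattered data hurts. The numbers are assumptions for illustration: 64-byte cache lines (a common size on current desktop and mobile CPUs) and a 12-byte position (three 32-bit floats). The model ignores hardware prefetching and assumes that, in the scattered case, every position lands in a different cache line.

```python
import math

CACHE_LINE = 64      # bytes per cache line (assumed; common on current CPUs)
POSITION_SIZE = 12   # bytes: three 32-bit floats (x, y, z)


def lines_needed_packed(count, elem_size=POSITION_SIZE, line=CACHE_LINE):
    """Cache lines touched when elements are stored back to back."""
    return math.ceil(count * elem_size / line)


def lines_needed_scattered(count, elem_size=POSITION_SIZE, line=CACHE_LINE):
    """Worst case: every element sits in its own, unrelated cache line."""
    return count * math.ceil(elem_size / line)


n = 10_000
packed = lines_needed_packed(n)
scattered = lines_needed_scattered(n)
print(packed, scattered, round(scattered / packed, 1))  # 1875 10000 5.3
```

Under these simplified assumptions, reading the same 10,000 positions costs roughly five times as many memory fetches when the data is scattered.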

First, GameObjects are no more. In their place, ECS has Entities, which are little more than an ID in an array that belongs to an “Entity Manager”. Unlike with GameObjects, you don’t do work on individual entities; instead, you operate on them in bulk.
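A minimal sketch of that idea in Python, with hypothetical names (this is not Unity’s actual `EntityManager` API): an entity is just an integer index, the manager only tracks which indices are alive, and creation and destruction work on whole batches of IDs. Recycling freed IDs through a free list is an assumption added for illustration.

```python
class EntityManager:
    """Hypothetical manager: entities are plain integer IDs, not objects."""

    def __init__(self):
        self._alive = []  # index = entity ID, value = whether that entity exists
        self._free = []   # IDs of destroyed entities, available for reuse

    def create_entities(self, count):
        """Create `count` entities at once and return their IDs."""
        ids = []
        for _ in range(count):
            if self._free:
                eid = self._free.pop()
                self._alive[eid] = True
            else:
                eid = len(self._alive)
                self._alive.append(True)
            ids.append(eid)
        return ids

    def destroy_entities(self, ids):
        """Destroy a batch of entities; their IDs become reusable."""
        for eid in ids:
            if self._alive[eid]:
                self._alive[eid] = False
                self._free.append(eid)

    def exists(self, eid):
        return 0 <= eid < len(self._alive) and self._alive[eid]


em = EntityManager()
first = em.create_entities(3)   # [0, 1, 2]
em.destroy_entities([1])
second = em.create_entities(2)  # [1, 3]: ID 1 is recycled, then a new ID
print(first, second)
```

Nothing in the manager knows what an entity “is”; that is entirely decided by the Components attached to its ID.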

Components still exist, and largely have the same role they have had until now. They add qualities to objects, and you can attach and detach them at will, just like before. This means that ECS retains the flexibility of composition that has characterised Unity from the start. The difference: ECS Components are only data, with no logic.
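Here is what “data only” looks like in a hypothetical Python sketch: components are plain dataclasses with fields and no behaviour, and attaching or detaching one just changes which component types an entity has. The per-entity dictionary is used only for clarity; it is exactly the kind of scattered layout that ECS storage is designed to avoid.

```python
from dataclasses import dataclass


@dataclass
class Position:   # pure data: no Update(), no methods
    x: float
    y: float


@dataclass
class Velocity:   # pure data as well
    dx: float
    dy: float


# entity ID -> {component type: component instance}  (illustrative storage only)
components = {}


def add_component(entity, component):
    components.setdefault(entity, {})[type(component)] = component


def remove_component(entity, component_type):
    components.get(entity, {}).pop(component_type, None)


def has_component(entity, component_type):
    return component_type in components.get(entity, {})


# Composition: entity 0 starts as a moving object, then stops moving.
add_component(0, Position(0.0, 0.0))
add_component(0, Velocity(1.0, 0.0))
remove_component(0, Velocity)
print(has_component(0, Position), has_component(0, Velocity))  # True False
```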

Now for the good part. In ECS, Entities that have the same set of Components are grouped into what we call Archetypes. The Components of entities that share the same Archetype are packed into memory next to each other (as in the image above, on the right). This change alone means that when a piece of code iterates over many objects, it can efficiently find them, move them into the CPU cache in bulk, operate on them, and move on.
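The grouping can be sketched in Python (hypothetical names, not Unity’s implementation): each Archetype is keyed by the exact set of component names and stores one column per component plus a parallel list of entity IDs, so all values of one component for that Archetype sit side by side. Python lists hold references rather than raw values, so this models the grouping, not the actual byte layout an engine would use.

```python
class Archetype:
    """All entities that have exactly the same set of component names."""

    def __init__(self, component_names):
        self.component_names = frozenset(component_names)
        self.entities = []  # entity IDs; row i of every column belongs to entities[i]
        self.columns = {name: [] for name in self.component_names}

    def append(self, entity, components):
        self.entities.append(entity)
        for name in self.component_names:
            self.columns[name].append(components[name])


class World:
    def __init__(self):
        self.archetypes = {}  # frozenset of component names -> Archetype

    def add_entity(self, entity, **components):
        key = frozenset(components)
        if key not in self.archetypes:
            self.archetypes[key] = Archetype(key)
        self.archetypes[key].append(entity, components)


world = World()
world.add_entity(0, position=(0.0, 0.0), velocity=(1.0, 0.0))
world.add_entity(1, position=(5.0, 5.0))  # a static object: different Archetype
world.add_entity(2, position=(2.0, 2.0), velocity=(0.0, 1.0))

moving = world.archetypes[frozenset({"position", "velocity"})]
print(len(world.archetypes))       # 2
print(moving.entities)             # [0, 2]
print(moving.columns["position"])  # [(0.0, 0.0), (2.0, 2.0)]
```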

This is possible because logic no longer lives on the individual object, as it does with MonoBehaviour scripts, but in a separate place: Systems. Systems are scripts that explicitly declare which entities they are interested in by filtering the game world by the Components they require, and therefore by Archetype. Much like a database query, this lets them find all of the entities they need without costly GameObject.Find or GetComponent calls.
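A System can be sketched as a query plus a loop. In this hypothetical Python model (`query` and `MoveSystem` are illustrative names, not Unity API), an Archetype matches when it contains at least the required components, much like a `WHERE` clause, and the System’s logic then runs over each matching Archetype’s columns. Positions and velocities are one-dimensional floats to keep the arithmetic easy to follow.

```python
class Archetype:
    """Minimal stand-in: one list (column) of values per component name."""

    def __init__(self, columns):
        self.columns = columns
        self.component_names = frozenset(columns)


def query(archetypes, *required):
    """Yield every Archetype that has at least the required components."""
    need = frozenset(required)
    for arch in archetypes:
        if need <= arch.component_names:
            yield arch


class MoveSystem:
    """The logic lives here, not on the entities: position += velocity * dt."""
    required = ("position", "velocity")

    def update(self, archetypes, dt):
        for arch in query(archetypes, *self.required):
            pos, vel = arch.columns["position"], arch.columns["velocity"]
            for i in range(len(pos)):  # tight loop over columns stored side by side
                pos[i] += vel[i] * dt


movers = Archetype({"position": [0.0, 10.0], "velocity": [1.0, -2.0]})
movers_with_health = Archetype({"position": [5.0], "velocity": [4.0], "health": [100]})
statics = Archetype({"position": [3.0]})

MoveSystem().update([movers, movers_with_health, statics], dt=0.5)
print(movers.columns["position"])              # [0.5, 9.0]
print(movers_with_health.columns["position"])  # [7.0]
print(statics.columns["position"])             # [3.0]
```

Note that the System never asks each object for its components: it picks whole Archetypes up front and skips the static ones entirely.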

To recap: GameObjects become Entities, Components are still present (but now contain only data), and instead of MonoBehaviours we now have Systems. Easy, right?

This seemingly simple summary obviously has big implications for the way we will create games in Unity, and for the direction Unity is taking. There are also other sides (and benefits!) to it that I can’t cover in a single article.

The future of Unity

If you are interested in learning more about DOTS, we have a landing page on the Unity website. DOTS will also be a centerpiece of the upcoming Unite Copenhagen event. Even if you are not attending, you will be able to watch the keynote on YouTube on the 23rd of September.

After that, I will be going into more detail about DOTS at both Codemotion Milan (Oct 24th-25th) and Codemotion Berlin (Nov 12th-13th) 2019. I will be hosting a talk and a Lab at each location.

You can already book a spot at the Labs in Milan here and Berlin here.

DOTS brings big changes to Unity, and it might take a while before all of these pieces fall into place. However, even if you won’t use DOTS in your projects right now, I suggest taking a look at it so you are ready for the future.